AI Agents: Why Your LLM Addiction Is Costing a Fortune

Tokens aren't the villain. Your architecture is. Here's how to audit and gut the waste in multi-agent AI madness.

We all figured multi-agent AI meant slogging through APIs and bugs. Backboard MCP flips that: describe it, Claude builds it. Instant. But who's really cashing in?

Picture this: AI spits out perfect cloud infra code, audits it, fixes flaws — all without a single human meeting. Sounds dreamy, right? Until the security bot chases its tail forever on a public load balancer.

At 1,000 agents, verification ballooned to 50 seconds, which is deadly for real-time AI fleets. Here's the math, failures, and fixes from the trenches of Agora 2.0.

Everyone thought chaining AI agents would unlock god-like automation. Reality? They're crumbling under their own weight. Here's the gritty truth from the trenches.

Your AI agent can forge unbreakable crypto chains proving its origins. But without an email address, it hits a wall signing up for APIs. Here's the overlooked split.

Picture this: three AI coding agents clashing over auth.py, overwriting changes, breaking tests. One dev's fix? Bernstein, a non-LLM orchestrator that turns chaos into parallel precision.

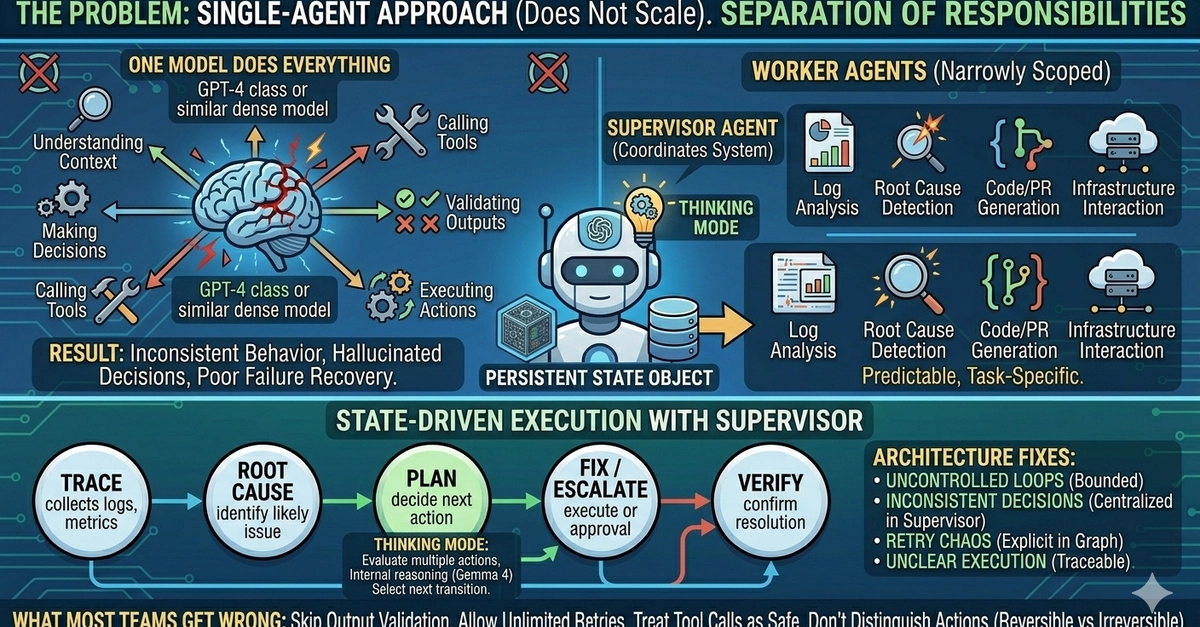

Single AI agents crash and burn under real workloads. Gemma 4's multi-agent blueprint with supervisors might change that – if you dodge the usual pitfalls.

Everyone thought AI would just spit out code snippets. But one dev flipped the script: AI agents now form full software teams, building entire platforms autonomously.

Hit 30 agents in your LangGraph setup? Latency explodes, smarts flatline. QIS flips the script with peer-to-peer insight sharing that scales quadratically.