A developer is staring at their screen, the cursor blinking mockingly. Another bug report, another convoluted stack trace. This is the daily grind. And now, the machines are supposed to fix it. Or at least, help.

And help they might, but not in the way you’d expect. The latest buzz out of the AI coding arena isn’t about a gargantuan, pay-per-token behemoth that costs an arm and a leg. No, it’s about a decidedly cheaper model, MiniMax M2.5, which, when paired with something called the Xanther Context Engine (XCE), just blew the doors off the SWE-bench Verified leaderboard. We’re talking a 78.2% resolution rate on 500 real-world Python bugs.

That’s right. Cheaper. Better. The holy grail of tech. And it absolutely humiliates the big names. Claude 4.5 Opus, the darling of many a developer chat, clocked in at 76.8% with its ‘high reasoning’ mode enabled. The kicker? Opus costs 37 times more per call. Thirty. Seven. Times. If that doesn’t make you question every multi-billion-dollar AI investment, you’re not paying attention.

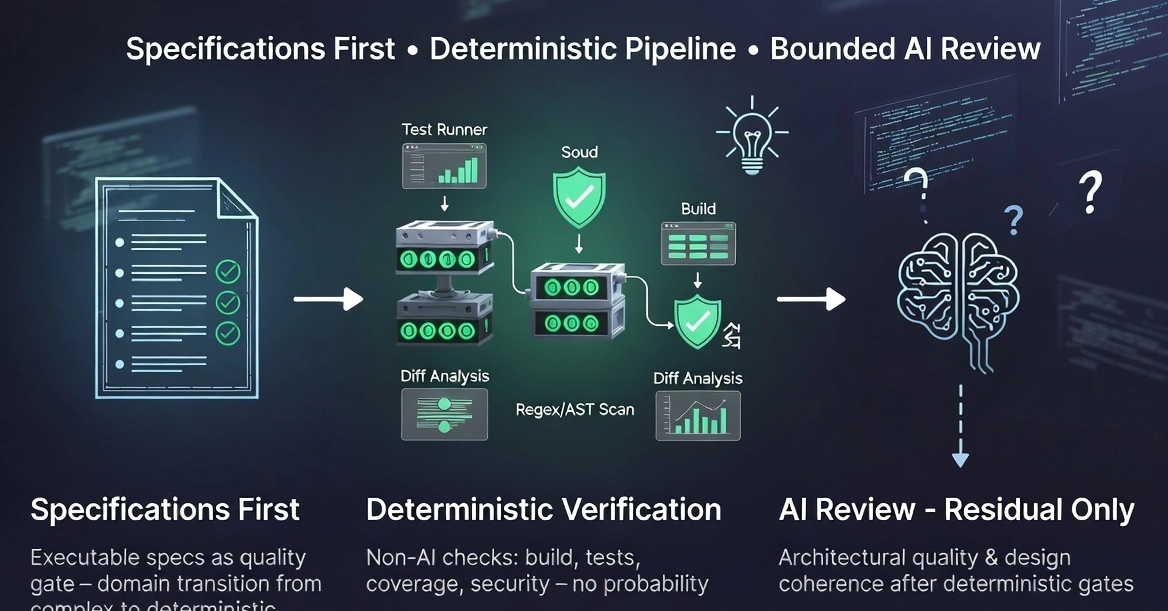

Here’s the dirty secret: the model itself isn’t the special sauce. The original article makes this abundantly clear. The improvement (that game-changing 2.4-percentage-point jump for MiniMax M2.5, and an even more dramatic 7.4 points for Sonnet 4.0) comes entirely from better context. Not a fundamentally smarter AI. Just… better information fed to the existing one.
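
For scale: SWE-bench Verified has 500 tasks, so one percentage point equals five tasks. The minimal sketch below converts the figures quoted in this article into task counts. The `points_to_tasks` helper is our own illustration, and the 75.8% baseline for MiniMax M2.5 without XCE is simply derived as 78.2 minus 2.4, not a separately reported number.

```python
# Illustrative arithmetic only: converts benchmark percentages into task counts.
TOTAL_TASKS = 500  # size of SWE-bench Verified, per the article


def points_to_tasks(points: float, total: int = TOTAL_TASKS) -> int:
    """Convert a percentage (or a percentage-point delta) into a number of tasks."""
    # round() absorbs floating-point noise such as 391.00000000000006
    return round(points / 100 * total)


minimax_baseline = points_to_tasks(78.2 - 2.4)  # derived: MiniMax M2.5 without XCE
minimax_xce = points_to_tasks(78.2)             # MiniMax M2.5 + XCE
opus_high = points_to_tasks(76.8)               # Claude 4.5 Opus, high reasoning
minimax_gain = points_to_tasks(2.4)             # XCE gain for MiniMax M2.5
sonnet_gain = points_to_tasks(7.4)              # XCE gain for Sonnet 4.0

print(minimax_baseline, minimax_xce, opus_high, minimax_gain, sonnet_gain)
# prints: 379 391 384 12 37
```

Put that way, XCE’s gain for MiniMax M2.5 is twelve extra resolved tasks, and its margin over Opus is seven.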

The Old Way vs. The New Trick

SWE-bench Verified. It’s the real deal. 500 actual bugs, plucked from the digital soil of open-source Python projects like Django and scikit-learn. We’re not talking about some contrived test case generated in a Silicon Valley echo chamber. These are repositories with tens of thousands of stars, hundreds of thousands of lines of code, and architectures that would make a seasoned engineer sweat. Each task bundles a problem statement, a snapshot of the codebase, the gold-standard fix, and the test cases that verify it, but the agent only gets the first two; the fix and the tests are held back for grading. From there it must navigate the codebase and write a patch that makes the tests pass, with no hints, no file locations, just the raw problem.
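
The split between what the agent sees and what is held back for grading can be sketched like this. The field names mirror those in the public SWE-bench dataset as we understand it (`problem_statement`, `patch`, `test_patch`, `FAIL_TO_PASS`, `PASS_TO_PASS`), but the `Task` class, the helper functions, and all placeholder values are our own illustration, not the official evaluation harness. A task counts as resolved only if the tests the fix should repair now pass and the tests that already passed still do.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    # Field names modeled on the public SWE-bench dataset; values used below are placeholders.
    instance_id: str
    repo: str
    base_commit: str
    problem_statement: str
    patch: str       # gold-standard fix: hidden from the agent
    test_patch: str  # grading tests: hidden from the agent
    fail_to_pass: list[str] = field(default_factory=list)  # must flip from failing to passing
    pass_to_pass: list[str] = field(default_factory=list)  # must keep passing (no regressions)


def agent_view(task: Task) -> dict[str, str]:
    """Everything the agent may see: the issue text and the codebase snapshot."""
    return {
        "repo": task.repo,
        "base_commit": task.base_commit,
        "problem_statement": task.problem_statement,
    }


def is_resolved(task: Task, results: dict[str, bool]) -> bool:
    """Resolved only if every required test passed after applying the agent's patch."""
    required = task.fail_to_pass + task.pass_to_pass
    return all(results.get(test, False) for test in required)


def resolution_rate(outcomes: list[bool]) -> float:
    """Fraction of tasks resolved across the benchmark."""
    return sum(outcomes) / len(outcomes)


# Hypothetical example task.
task = Task(
    instance_id="example__repo-1",
    repo="example/repo",
    base_commit="<commit sha>",
    problem_statement="Cache crashes under concurrent access",
    patch="<gold diff>",
    test_patch="<test diff>",
    fail_to_pass=["test_race"],
    pass_to_pass=["test_basic"],
)
print(sorted(agent_view(task)))                  # no 'patch' or 'test_patch' keys
print(is_resolved(task, {"test_race": True}))    # False: test_basic missing
print(resolution_rate([True] * 391 + [False] * 109))
```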

And the old way? It was a slog. Take django__django-16379, a FileBasedCache that crashes under concurrent access. Without XCE, the AI agent was like a blindfolded intern. It’d find the relevant file, sure. But it wouldn’t grasp the nuances: the inheritance chains, the concurrent access patterns. It’d make a fix that broke something else. Back and forth. Fifteen file reads, thousands of tokens, and still a miss.


Now, enter XCE. A single call: `xce_get_context("FileBasedCache FileNotFoundError concurrent access")`. Suddenly, the agent has the blueprint: the cache backend hierarchy, the locking mechanisms, the potential race conditions. It understands the architecture. The result? A correct fix on the first attempt, with fewer tokens and less time. It’s the difference between a skilled craftsman and someone fumbling in the dark.
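
The article doesn’t describe how XCE builds its answer, so the sketch below is not XCE. It is a toy stand-in, assuming that “context” means structural facts about the code: the class named in the query, its inheritance chain, and the methods that mention other query terms. `toy_get_context`, `index_repo`, and the `REPO` dictionary are all hypothetical; the class bodies are loosely modeled on Django’s cache backends but are not Django’s code. Parsing uses Python’s standard `ast` module, so nothing in the toy repository is executed.

```python
import ast
import re
from dataclasses import dataclass, field

# Hypothetical mini-repository: module path -> source text (NOT Django's real code).
REPO = {
    "cache/backends/base.py": (
        "class BaseCache:\n"
        "    def get(self, key, default=None):\n"
        "        raise NotImplementedError\n"
    ),
    "cache/backends/filebased.py": (
        "class FileBasedCache(BaseCache):\n"
        "    def has_key(self, key):\n"
        "        fname = self._key_to_file(key)\n"
        "        try:\n"
        "            with open(fname, 'rb') as f:\n"
        "                return not self._is_expired(f)\n"
        "        except FileNotFoundError:\n"
        "            return False\n"
    ),
    "cache/backends/locmem.py": (
        "class LocMemCache(BaseCache):\n"
        "    pass\n"
    ),
}


@dataclass
class ClassInfo:
    module: str
    bases: list[str]
    # method name -> identifiers referenced inside that method
    methods: dict[str, set[str]] = field(default_factory=dict)


def index_repo(repo: dict[str, str]) -> dict[str, ClassInfo]:
    """Parse each module (without executing it) and record classes, bases, and methods."""
    index: dict[str, ClassInfo] = {}
    for module, source in repo.items():
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, ast.ClassDef):
                bases = [b.id for b in node.bases if isinstance(b, ast.Name)]
                info = ClassInfo(module, bases)
                for item in node.body:
                    if isinstance(item, ast.FunctionDef):
                        info.methods[item.name] = {
                            n.id for n in ast.walk(item) if isinstance(n, ast.Name)
                        }
                index[node.name] = info
    return index


def toy_get_context(query: str, index: dict[str, ClassInfo]) -> list[str]:
    """Return context lines for every indexed class named in the query."""
    terms = set(re.findall(r"\w+", query))
    lines: list[str] = []
    for name in sorted(terms.intersection(index)):
        # Follow the first base class upward so the model sees the hierarchy.
        chain = [name]
        while index.get(chain[-1]) is not None and index[chain[-1]].bases:
            chain.append(index[chain[-1]].bases[0])
        lines.append(f"{index[name].module}: {' -> '.join(chain)}")
        # Flag methods that mention another query term, e.g. an exception name.
        for method, names in sorted(index[name].methods.items()):
            hits = sorted(names & terms)
            if hits:
                lines.append(f"  {name}.{method} references {', '.join(hits)}")
    return lines


for line in toy_get_context("FileBasedCache FileNotFoundError concurrent access", index_repo(REPO)):
    print(line)
```

A real context engine would presumably add much more (call graphs, locking code, tests), but even this toy shows the shift: the agent starts from a map of the hierarchy instead of a blind file search.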

The Architectural Maze

This isn’t about a uniform boost across the board. XCE’s magic touch is amplified by architectural complexity. For instance, SymPy, a behemoth of interdependent mathematical modules, saw a 17% improvement. Why? Because a fix in one corner of SymPy ripples through dozens of others. Without understanding those deep dependencies, the AI is just guessing. XCE provides that map.
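
Why does a fix “ripple”? One way to see it: invert the import graph and walk it breadth-first to find every module that transitively depends on the one you changed. The graph below is a hypothetical toy with SymPy-flavored module names; its edges are invented for illustration and do not reflect SymPy’s real dependency structure.

```python
from collections import deque

# Hypothetical import graph: module -> modules it imports (NOT SymPy's real graph).
IMPORTS = {
    "core": [],
    "polys": ["core"],
    "simplify": ["core", "polys"],
    "solvers": ["core", "polys", "simplify"],
    "integrals": ["core", "simplify"],
    "printing": ["core"],
}


def affected_by(changed: str, imports: dict[str, list[str]]) -> list[str]:
    """Every module that directly or transitively imports `changed`."""
    # Invert the edges: module -> modules that import it.
    importers: dict[str, list[str]] = {m: [] for m in imports}
    for module, deps in imports.items():
        for dep in deps:
            importers.setdefault(dep, []).append(module)
    # Breadth-first search over the reversed graph.
    seen = {changed}
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in importers.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    seen.discard(changed)
    return sorted(seen)


print(affected_by("polys", IMPORTS))  # ['integrals', 'simplify', 'solvers']
```

A change to a leaf like `printing` touches nothing else, while a change to `core` reaches every module in the toy graph; that asymmetry is the kind of map the article credits XCE with supplying.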

Similarly, scikit-learn’s complex estimator inheritance chains meant a 13% leap with XCE. The original article states it plainly: ‘The improvement comes entirely from better context, not a better model.’ This is the core insight, folks. The models are getting good. But they’re being deployed like hammers to solve every problem. XCE is the equivalent of putting that hammer in the hands of a trained carpenter with a precise set of blueprints.
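
Inheritance chains make this concrete. In Python, the class that actually supplies a method is decided by the method resolution order (`__mro__`), so the code you need to change can live several classes away from the one named in the bug report. The toy classes below borrow scikit-learn’s estimator-plus-mixin pattern but are our own illustration, not scikit-learn code; `defined_in` is a hypothetical helper.

```python
# Toy hierarchy in the style of scikit-learn's estimator/mixin pattern (not sklearn code).
class ToyBaseEstimator:
    def get_params(self):
        return {}


class ToyClassifierMixin:
    def score(self, X, y):
        # A real mixin would compute accuracy; this toy just returns a constant.
        return 0.0


class ToyLinearModel(ToyBaseEstimator):
    def fit(self, X, y):
        return self


class ToyLogisticRegression(ToyClassifierMixin, ToyLinearModel):
    pass


def defined_in(cls: type, attr: str) -> str:
    """Name of the first class in cls's MRO whose own namespace defines attr."""
    for klass in cls.__mro__:
        if attr in vars(klass):
            return klass.__name__
    raise AttributeError(attr)


print([k.__name__ for k in ToyLogisticRegression.__mro__])
for method in ("fit", "score", "get_params"):
    print(method, "->", defined_in(ToyLogisticRegression, method))
```

None of the three methods is defined on `ToyLogisticRegression` itself; each comes from a different ancestor. An agent that only reads the leaf class sees none of them.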

Is This Just a New Coat of Paint?

The implications here are staggering. We’ve been conditioned to believe that to get smarter AI, you need bigger, more expensive models. That’s the narrative pushed by the giants. This research, if it holds up, suggests that the real innovation might lie in how we interact with and inform these models. It’s a return to first principles, in a way. Understanding the problem domain, and feeding that understanding to the tool.

This isn’t just about scoring higher on a benchmark. This is about making AI coding assistants practical for the messy reality of software development. Imagine your current coding AI, the one you maybe use for autocomplete or answering simple questions. Now imagine it actually understanding your project’s architecture. It’s no longer just a glorified search engine; it’s becoming a true collaborator. And at $0.02 per call? That’s collaboration anyone can afford.

The cost savings are monumental. Let’s do some quick math. Say a project needs 100 bug fixes a year, and each fix takes one AI call. Using Claude Opus at $0.75/call, that’s $75 per year. With MiniMax M2.5 and XCE at $0.02/call, it’s $2. That’s a saving of roughly 97%. For a large enterprise with thousands of developers? The numbers become astronomical.
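
The same back-of-the-envelope estimate as a tiny script, using the article’s per-call prices of $0.75 (Opus) and $0.02 (MiniMax M2.5 with XCE) and its one-call-per-fix assumption. The `calls_per_fix` parameter is our own hypothetical knob for relaxing that assumption, since agent runs often make many calls per task. Amounts are kept in integer cents to avoid floating-point rounding in the money math.

```python
# Per-call prices quoted in the article, in integer cents.
OPUS_CENTS_PER_CALL = 75     # Claude 4.5 Opus
MINIMAX_CENTS_PER_CALL = 2   # MiniMax M2.5 + XCE


def annual_cost_cents(fixes_per_year: int, cents_per_call: int, calls_per_fix: int = 1) -> int:
    """Yearly spend, assuming a fixed number of model calls per bug fix."""
    return fixes_per_year * calls_per_fix * cents_per_call


opus = annual_cost_cents(100, OPUS_CENTS_PER_CALL)         # 7500 cents = $75
minimax = annual_cost_cents(100, MINIMAX_CENTS_PER_CALL)   # 200 cents = $2
saving = 1 - minimax / opus
price_ratio = OPUS_CENTS_PER_CALL / MINIMAX_CENTS_PER_CALL

print(f"Opus ${opus / 100:.2f}, MiniMax+XCE ${minimax / 100:.2f}, "
      f"saving {saving:.1%}, price ratio {price_ratio}x")
# prints: Opus $75.00, MiniMax+XCE $2.00, saving 97.3%, price ratio 37.5x
```

The 37.5x ratio is where the “37 times more per call” figure comes from. Because `calls_per_fix` scales both totals equally, the percentage saving stays the same however many calls a fix really takes; only the absolute dollar gap grows.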

Of course, there’s always a caveat. The benchmark is SWE-bench Verified, specifically for Python. Real-world development involves multiple languages, complex build systems, and human communication. But the principle remains. Context is king. And the ability to provide that context efficiently is the next frontier.

This is why a $0.02/call model scoring 78.2% on SWE-bench Verified, given the right context, is such a seismic event. It’s not just a number on a leaderboard; it’s a fundamental reframing of how we approach AI in software engineering. It suggests that the path to true AI-powered productivity isn’t always paved with bigger, more expensive models, but with better-informed ones. And that’s a message every developer, and every CTO, needs to hear.


Frequently Asked Questions

Will this replace human developers? No. While AI coding tools are improving rapidly, they currently excel at specific tasks like bug fixing within defined parameters. Complex problem-solving, architectural design, and strategic decision-making still require human expertise and creativity. Think of it as a powerful assistant, not a replacement.

How does the Xanther Context Engine (XCE) actually provide context? While the original article doesn’t detail the internal workings of XCE, it implies it extracts and structures relevant architectural information from the codebase and problem statement. This context is then fed to the AI model to enhance its understanding of the project’s structure and dependencies.