For organizations scrambling to build sophisticated visual RAG pipelines — think invoice extraction, contract analysis, or automated document understanding — the cost and complexity of running state-of-the-art models have been a persistent bottleneck. But a recent benchmark on the AMD Developer Cloud suggests a dramatic shift in that calculus. A 72-billion parameter vision-language model, Qwen2-VL-72B, has been successfully loaded and served at full precision on a single AMD Instinct MI300X GPU, clocking in at a startlingly low $1.99 per hour. This isn’t just about running a model; it’s about making powerful AI accessible and economically viable for a broader range of applications.

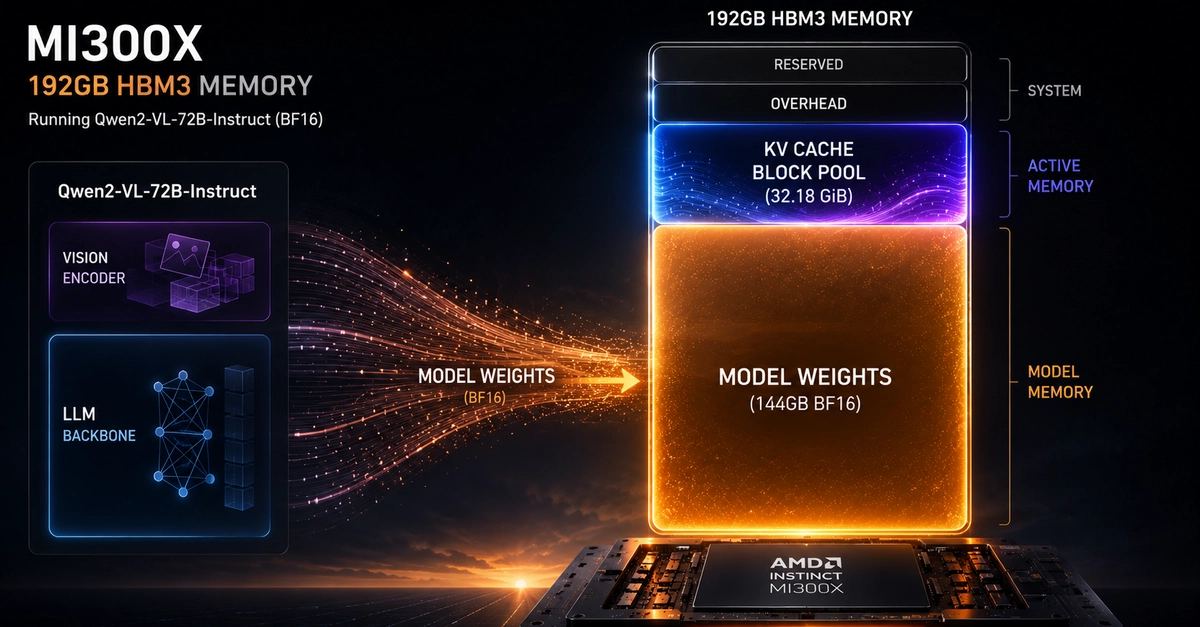

The core challenge has always been VRAM. A model with 72 billion parameters, when loaded in BF16 precision, demands roughly 144GB of memory. Traditionally, this forces developers onto NVIDIA’s 80GB A100 or H100 GPUs, which necessitates aggressive 4-bit quantization. This quantization, while saving memory, severely compromises the critical OCR accuracy and multimodal reasoning capabilities essential for tasks like document processing.

The MI300X Advantage: More VRAM, Less Hassle

The AMD Instinct MI300X, boasting a substantial 192GB of HBM3 memory, fundamentally alters the deployment landscape. It’s large enough to accommodate the entire unquantized Qwen2-VL-72B model and still offers approximately 48GB of headroom. This surplus isn’t just buffer; it’s the crucial space required for KV caches, enabling concurrent workloads and larger context windows without succumbing to Out-of-Memory errors. The implication? No more sacrificing model fidelity for cost-effectiveness.

And let’s talk about cost. Evaluating hardware is never just about the sticker price; it’s about the cost per usable gigabyte of VRAM. On NVIDIA’s ecosystem, to get the necessary 144GB+ for an unquantized 72B model, you’re typically looking at a multi-GPU setup. This means either two A100s (80GB each) or H100s (80GB each). Standard cloud pricing for these configurations, even from cost-conscious providers like Lambda Cloud or CoreWeave, can range from $3-$8 per hour per card. Add to that the performance penalty of tensor parallelism (TP=2) – the inter-GPU communication overhead that inflates latency – and the total cost of ownership escalates dramatically. The MI300X, at $1.99 per hour, doesn’t just offer a discount; it fundamentally simplifies the infrastructure by eliminating the need for multi-GPU sharding and its associated communication drag. The memory subsystem here, with 5.3 TB/s bandwidth, appears to deliver on its promise, with reported inter-token latencies of 43-66ms validating its performance.

Why Does This Matter for Enterprise RAG?

For businesses looking to scale visual RAG systems – applications that ingest and understand documents, extract data, and provide intelligent answers – this development is a game-changer. The economics have shifted. Instead of viewing advanced multimodal inference as a significant capital or operational expenditure, it’s becoming a more manageable cost center. This opens the door for smaller organizations or those with tighter budgets to implement sophisticated AI solutions that were previously out of reach. Imagine automating complex legal document review or real-time invoice processing across an entire enterprise – the ROI potential just got a significant boost.

The setup itself, as detailed in the benchmark, highlights the practical steps. It involved provisioning an Ubuntu 22.04 instance with ROCm 7.2.0 on the AMD Developer Cloud, featuring the 192GB MI300X GPU. Crucially, the guide emphasizes directing the HuggingFace cache to a large NVMe SSD. This is not a minor detail; it accelerates model loading from minutes to seconds, an essential optimization for rapid development and deployment cycles. The provided script for formatting and mounting the NVMe drive is a clear indicator of the practical, hands-on approach taken.

“192GB of HBM3 memory, it fits the full unquantized model on a single GPU and leaves 48GB of headroom for KV caches and concurrent workloads.”

This quote encapsulates the core technical advantage. The headroom is key. It’s the difference between a model that barely runs and one that can handle the demands of real-world, concurrent inference tasks. It allows for base64-encoded images to be processed without issues, for longer context windows to be maintained during conversations, and for batch requests to be processed efficiently. This isn’t just about fitting the model; it’s about making it usable at scale.

A Historical Parallel: The Democratization of Compute

This MI300X benchmark echoes the early days of cloud computing and the subsequent rise of powerful, yet accessible, single-board computers like the Raspberry Pi. In each instance, a significant technological barrier—previously requiring substantial investment and specialized knowledge—becomes democratized. For decades, high-performance computing for AI meant expensive, specialized clusters. Now, the path to running large, unquantized models is being paved by hardware like the MI300X, lowering the barrier to entry considerably. It’s a signal that the era of GPU compute scarcity for cutting-edge AI might be receding, replaced by a focus on optimized deployment and cost-effective scaling.

The implications for open-source models are particularly potent. As models like Qwen2-VL-72B become more capable, their accessibility on cost-effective hardware ensures that the innovation driven by the open-source community can be more readily adopted and iterated upon by a wider array of developers and businesses. This isn’t just about one benchmark; it’s about a fundamental shift in how powerful AI can be deployed, promising a more dynamic and competitive landscape for AI development.

What Does This Mean for the Average User?

While direct end-users might not immediately see the MI300X, the ripple effect is significant. More accessible and affordable AI infrastructure means faster development of AI-powered applications. Expect to see more sophisticated chatbots, smarter document analysis tools, and more intelligent visual search capabilities emerge, all potentially at lower price points or with enhanced features due to the reduced inference costs. It’s a quiet revolution happening in the data centers, with tangible benefits for the tools we use daily.

🧬 Related Insights

- Read more: Tiny Linux Giants: The Base Images Shrinking Your Container Empire

- Read more: High Schooler Storms KubeCon: Real Talk from a Teen Speaker on Open Source’s Future

Frequently Asked Questions

What is Qwen2-VL-72B?

Qwen2-VL-72B is a large, open-weights vision-language model developed by Alibaba. It’s designed to understand and process both text and images, making it suitable for complex tasks like document analysis and image captioning.

Does this mean I can run a 72B model on my home PC?

While this benchmark shows impressive efficiency, running a 72B model even unquantized on a single MI300X requires specialized hardware not found in typical consumer PCs. However, the cost reduction for cloud inference could eventually lead to more affordable AI services for consumers.

Is the AMD MI300X replacing NVIDIA GPUs for AI?

It’s more accurate to say AMD is becoming a strong competitor. The MI300X’s large VRAM capacity and competitive pricing offer a compelling alternative for specific workloads, especially those benefiting from unquantized models and single-GPU deployments.